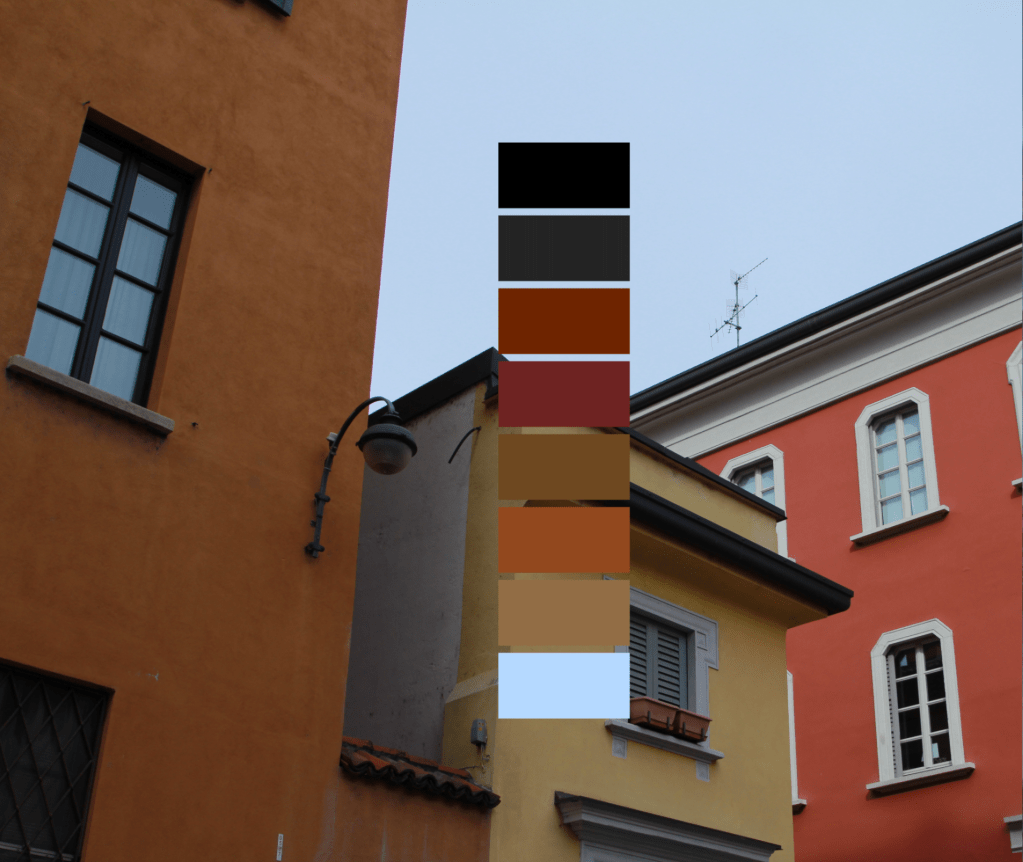

I began an experiment in 2015 as a way to stretch my legs in the Processing programming language. There was a script (from this website, link since broken) that counted the pixels of each color in a given image and displayed the results as a sequence of colored squares. By fiddling around with the code, one could customize the number, size, and positioning of the squares within the frame. The term “palette” seemed to fit the output well, and I liked that the word didn’t just mean a collection of colors, but could also describe the object upon which those colors are mixed together. I posted the results on Instagram.
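
For anyone curious about the mechanics, here is a minimal sketch of the idea in Python rather than Processing. The original script is no longer available, so this is an assumption about how such a tool might work, not a reconstruction of it. The function names (`palette`, `layout_squares`), the parameters, and the toy "image" are all hypothetical, and a real photo would probably need its colors grouped into coarser bins before counting.

```python
from collections import Counter

def palette(pixels, n_squares=5):
    """Return the n_squares most frequent colors with their pixel counts."""
    return Counter(pixels).most_common(n_squares)

def layout_squares(colors, size=40, gap=4, per_row=5, origin=(0, 0)):
    """Place one square per color, filling rows from left to right."""
    ox, oy = origin
    step = size + gap  # distance between the corners of neighboring squares
    squares = []
    for i, (color, count) in enumerate(colors):
        row, col = divmod(i, per_row)
        squares.append({
            "color": color,
            "count": count,
            "x": ox + col * step,
            "y": oy + row * step,
            "size": size,
        })
    return squares

# A toy "image": a flat list of (r, g, b) pixels standing in for a photo.
sky, sand, sea = (120, 180, 230), (230, 210, 160), (20, 90, 140)
pixels = [sky] * 6 + [sand] * 3 + [sea] * 1

# Keep the two most common colors and lay them out side by side.
swatches = layout_squares(palette(pixels, n_squares=2), per_row=2)
```

Changing `n_squares`, `size`, `gap`, `per_row`, and `origin` corresponds to the number, size, and positioning of the squares mentioned above. Drawing the squares is left to whatever graphics tool is at hand.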

On one hand, it’s a deceptively concrete exercise. Think about everything that’s captured within your field of vision when you conjure up your memory of a place. I was intrigued by the idea of deriving objective, “solid-feeling” data from an inherently subjective and fleeting view – seeing the world like a machine, counting the colored pixels in a memory and chunking them into elegant squares to present a tidy visual summary of a context’s genius loci.

On the other hand, datasets are just as informed by what they omit (and who’s doing the omitting). Things that are cropped out and relegated beyond the frame are often just as important as what remains. This goes as much for the events of a place as for the location in which those events transpire (and is why “rosy retrospection” is a thing).

It felt like an intriguing contradiction to derive such a concrete calculation of a context’s “palette” after having spent only a short time there. After creating these, I often wondered whether someone native to that place would have taken issue with either the image I chose to represent their home or the colors of the resulting computer-generated palette.